Machine Learning Features vs. Parameters

If the parameters determined by nested cross-validation converge and are stable across folds, then the model keeps both variance and bias low, which is extremely useful given the bias-variance tradeoff normally encountered in statistical and machine learning. In datasets, features appear as columns.
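As a rough illustration of nested cross-validation, the sketch below uses a one-dimensional ridge regression written in plain Python. The outer loop estimates test error, while the inner loop picks the hyperparameter `lam` using only the outer training data. All function names, the fold scheme, and the toy data are assumptions made for this example, not taken from any particular library.

```python
# Minimal sketch of nested cross-validation (all names are hypothetical).
# Model: 1-D ridge regression without intercept, y ~ w * x.
# Parameter: w (fitted from data). Hyperparameter: lam (chosen by the inner loop).

def fit_ridge(xs, ys, lam):
    """Closed-form ridge fit: w = sum(x*y) / (sum(x*x) + lam)."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)

def mse(w, xs, ys):
    """Mean squared error of the prediction w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def k_folds(n, k):
    """Yield (train_indices, test_indices) for k interleaved folds."""
    for fold in range(k):
        test = [i for i in range(n) if i % k == fold]
        train = [i for i in range(n) if i % k != fold]
        yield train, test

def pick(values, indices):
    return [values[i] for i in indices]

def nested_cv(xs, ys, lams, outer_k=3, inner_k=2):
    chosen, scores = [], []
    for train, test in k_folds(len(xs), outer_k):
        x_tr, y_tr = pick(xs, train), pick(ys, train)

        def inner_error(lam):
            # Average validation error of lam over the inner folds.
            errors = []
            for fit_idx, val_idx in k_folds(len(x_tr), inner_k):
                w = fit_ridge(pick(x_tr, fit_idx), pick(y_tr, fit_idx), lam)
                errors.append(mse(w, pick(x_tr, val_idx), pick(y_tr, val_idx)))
            return sum(errors) / len(errors)

        best_lam = min(lams, key=inner_error)   # inner loop: tune lam
        w = fit_ridge(x_tr, y_tr, best_lam)     # refit on all outer-training data
        chosen.append(best_lam)
        scores.append(mse(w, pick(xs, test), pick(ys, test)))  # outer loop: evaluate
    return chosen, scores

xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1, 18.0]
chosen, scores = nested_cv(xs, ys, lams=[0.0, 1.0, 10.0])
print("lam chosen per outer fold:", chosen)
print("outer-fold test MSE:", scores)
```

If the same `lam` is chosen in every outer fold and the outer-fold errors are similar, that is one informal sign that the selection is stable.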


This dataset contains for every flower its petal l.

The output of the training process is a machine learning model. In a machine learning model there are two types of parameters: model parameters and hyperparameters.

The relationships that neural networks model are often very complicated ones and using a small network adapting the size of the network to the size of the training set i.e. In supervised learning, the target is already known and is used to train the model. The parameters learned this way are the fitted parameters.

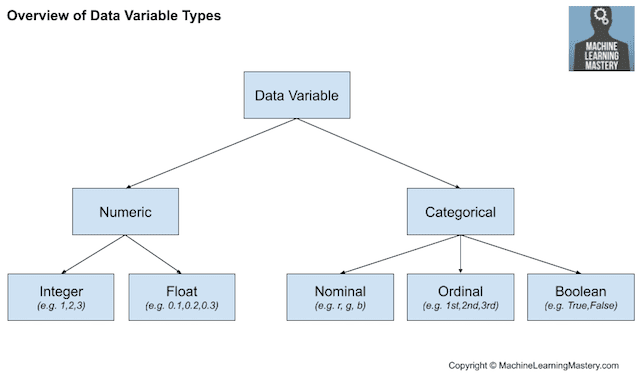

The features are the input variables of the trained model.

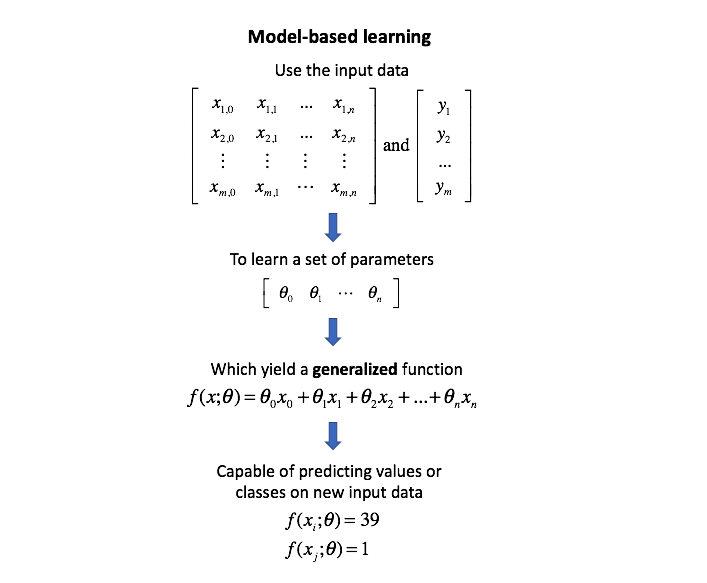

Machine learning is about learning one or more mathematical functions (models) using data to solve a particular task. The parameters that customize the function are the model parameters, or simply parameters, and they are exactly what the machine learns from the data (the training feature set). Hyperparameters, by contrast, govern how the algorithm behaves during the learning phase.
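To make the three terms concrete, here is a small sketch that fits a straight line by gradient descent in plain Python. The function name `train_linear`, the toy data, and the default values are assumptions chosen for illustration only.

```python
# Features: the input columns (here a single column, x).
# Parameters: w and b, which the training loop learns from the data.
# Hyperparameters: learning_rate and epochs, chosen by hand before training.

def train_linear(xs, ys, learning_rate=0.05, epochs=2000):
    w, b = 0.0, 0.0                      # parameters start at arbitrary values
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]           # one feature column
ys = [1.0, 3.0, 5.0, 7.0, 9.0]           # targets generated from y = 2x + 1
w, b = train_linear(xs, ys)
print("learned parameters:", round(w, 3), round(b, 3))
```

Changing `learning_rate` or `epochs` changes how the search proceeds, but the values of `w` and `b` still come from the data.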

The model decides which cars must be crushed for. For example suppose you want to build a. In an ML problem, features are the variables (dimensions) that represent a certain measure or value for every data point in your dataset.

A feature is a measurable property of the object you're trying to analyze. Feature quality and the quality of the training data both matter.

This holds in machine learning where these parameters may be estimated from data and used as part of a predictive model. The obvious benefit of having many parameters is that you can represent much more complicated functions than with fewer parameters. It is of the utmost importance to collect reliable data so that your machine learning model can find the correct patterns.

With things like naive Bayes you can have many more features. In this case, a parameter is a function argument that could take one of a range of values. Making your data look big just by using.

Model parameters describe how the target variable depends on the predictor variables. You can have more features than samples and still do fine. A validation set is a set of examples that cannot be used for learning the model but can help tune hyperparameters, e.g. selecting K in K-NN.
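The role of a validation set can be sketched with a tiny K-NN classifier on one-dimensional data. The helper names, the toy points (including one deliberately noisy label), and the candidate values of K are all assumptions made for this example.

```python
from collections import Counter

def knn_predict(train, point, k):
    """Predict a label by majority vote among the k nearest training points.

    train is a list of (feature_value, label) pairs with 1-D features.
    """
    neighbours = sorted(train, key=lambda item: abs(item[0] - point))[:k]
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0]

def accuracy(train, examples, k):
    """Fraction of examples whose predicted label matches the true label."""
    hits = sum(knn_predict(train, x, k) == label for x, label in examples)
    return hits / len(examples)

# Training data: the point at 1.6 carries a noisy label.
train = [(1.0, "a"), (1.2, "a"), (1.4, "a"), (1.6, "b"),
         (2.9, "b"), (3.0, "b"), (3.2, "b")]

# Validation data: never used to "fit" K-NN, only to compare values of K.
validation = [(1.1, "a"), (1.55, "a"), (3.1, "b"), (2.8, "b")]

scores = {k: accuracy(train, validation, k) for k in (1, 3, 5)}
best_k = max(scores, key=scores.get)
print(scores, "-> chosen K:", best_k)
```

Here K = 1 is misled by the noisy neighbour, while larger K values vote it down, which is the kind of difference a validation set is meant to expose.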

Consider a table which contains information on old cars. The answer is Feature Selection. The more data you feed your system the better it will be at learning.

Any machine learning problem can be represented as a function of three parameters, T, P, and E, where T stands for the task, P stands for performance, and E stands for experience (past data). Noise within the output values.

Model parameters vs. hyperparameters. This is usually a rather irrelevant question, because it depends on the model you are fitting. And can extract higher-level features from the raw data.

The image above contains a snippet of data from a public dataset with information about passengers on the ill-fated Titanic maiden voyage. Accuracy can easily be calculated from the confusion matrix.

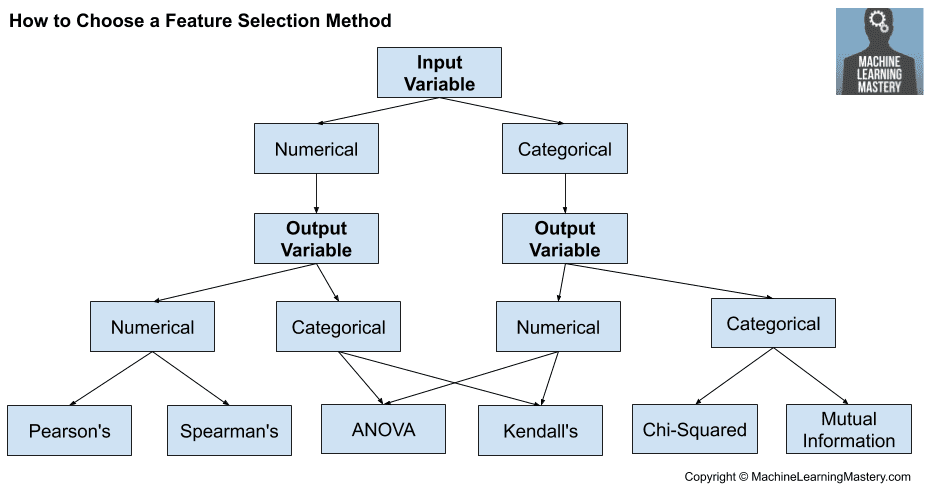

In machine learning the specific model you are using is the. Feature selection is the process used to select the input variables that are most important to your machine learning task.

These methods are easier to understand, and their results are easier to interpret. As with AI, machine learning vs. deep learning is a faulty comparison, as the latter is an integral part of the former.

Machine learning algorithms include supervised and unsupervised algorithms. Apart from choosing the right model for our data, we need to choose the right data to put in our model. The learning algorithm finds patterns in the training data that map the input features to the target.

This approach to feature selection uses Lasso (L1 regularization) and Elastic Net (L1 and L2 regularization). Model parameters are the weights and coefficients that the algorithm learns from the data. Hyperparameters, by contrast, are adjustable parameters that must be tuned in order to obtain a model with optimal performance.

Suppose you have a dataset for detecting the class to which a particular flower belongs. As you know machines initially learn from the data that you give them. Parametric models are very fast to learn from data.

Simple Neural Networks. It can be broken down into 7 major steps. Benefits of Parametric Machine Learning Algorithms.

Accuracy may be defined as the number of correct predictions as a ratio of all predictions made. Some techniques used are. Model parameters are the parameters in the model that must be determined using the training data set.

Are you fitting an L1-regularized logistic regression for a text model? Parametric models do not require as much training data and can work well even if the fit to the data is not perfect. The dimensionality of the input space.

The following snippet provides the python script used for the.

$$\text{Accuracy} = \frac{TP + TN}{TP + FP + FN + TN}$$

But it is actually really easy.
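As a quick sketch of how accuracy follows from the four confusion-matrix counts (true positives, false positives, false negatives, true negatives), the snippet below counts them for a binary problem and applies the ratio. The function names and the toy labels are assumptions for illustration.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (TP, FP, FN, TN) for a binary classification problem."""
    tp = fp = fn = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive and truth == positive:
            tp += 1
        elif pred == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn

def accuracy(tp, fp, fn, tn):
    """Correct predictions divided by all predictions."""
    return (tp + tn) / (tp + fp + fn + tn)

y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
tp, fp, fn, tn = confusion_counts(y_true, y_pred)
print(tp, fp, fn, tn, accuracy(tp, fp, fn, tn))  # 3 1 1 3 0.75
```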

In programming, you may pass a parameter to a function. Given some training data, the model parameters are fitted automatically. Machine Learning Problem = <T, P, E>.

The penalty is applied over the coefficients, thus bringing some of them down to zero. We can use accuracy.

Unsupervised machine learning algorithms are used when the data used for training is neither classified nor labeled. I like the definition in Hands-On Machine Learning with Scikit-Learn and TensorFlow by Aurélien Géron, where ATTRIBUTE = DATA TYPE (e.g. Mileage) and FEATURE = DATA TYPE + VALUE (e.g. Mileage = 50,000). Regarding FEATURE versus PARAMETER: based on the definition in Géron's book, I used to interpret FEATURE as the variable and the PARAMETER as the. Like does it always prioritize what I put in param_grid?

Each feature, or column, represents a measurable piece of data. Accuracy is the most common performance metric for classification algorithms. Regularization adds a penalty to the parameters of the machine learning model to avoid overfitting.
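To show how a penalty on the parameters can also act as feature selection, here is a minimal sketch of L1-regularized (Lasso) regression solved by coordinate descent in plain Python. The objective form, the absence of an intercept, the function names, and the toy data with two orthogonal feature columns are all assumptions made for this example.

```python
def soft_threshold(value, alpha):
    """Shrink value towards zero by alpha; values within [-alpha, alpha] become 0."""
    if value > alpha:
        return value - alpha
    if value < -alpha:
        return value + alpha
    return 0.0

def lasso_coordinate_descent(X, y, alpha, sweeps=100):
    """Minimise (1/2n) * ||y - Xw||^2 + alpha * ||w||_1 (no intercept)."""
    n, d = len(X), len(X[0])
    w = [0.0] * d
    for _ in range(sweeps):
        for j in range(d):
            # Correlation of feature j with the residual that ignores feature j.
            rho = 0.0
            for i in range(n):
                pred_without_j = sum(w[k] * X[i][k] for k in range(d) if k != j)
                rho += X[i][j] * (y[i] - pred_without_j)
            rho /= n
            z = sum(X[i][j] ** 2 for i in range(n)) / n
            w[j] = soft_threshold(rho, alpha) / z
    return w

# Two feature columns; the target depends strongly on the first
# and only weakly on the second.
X = [[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]]
y = [3.0 * a + 0.1 * b for a, b in X]

w = lasso_coordinate_descent(X, y, alpha=0.5)
print("coefficients:", w)
```

With this penalty strength the weak second coefficient is set exactly to zero, so that feature is effectively dropped, while the strong first coefficient is only shrunk.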

Machine learning is the scientific study of algorithms and statistical models that perform a specific task effectively without using explicit instructions.

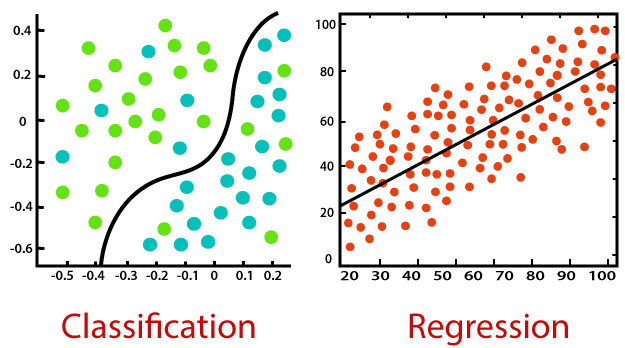
